Robot Learning

- DreMa: Compositional World Models ICLR 2025

- CaPo: Cooperative Plan Optimization ICLR 2025

- Graph Switching Dynamical Systems ICML 2023

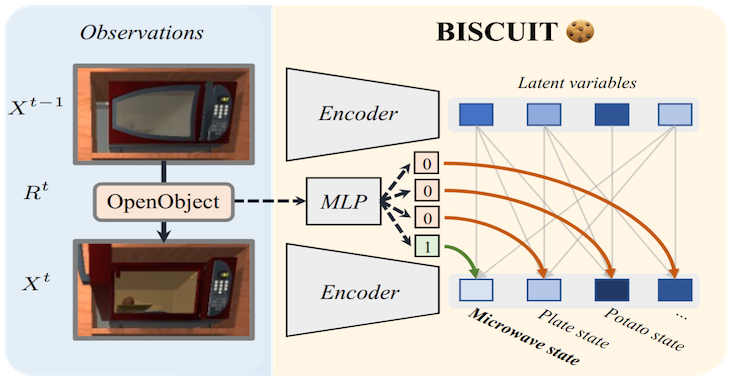

- BISCUIT: Causal Representation Learning UAI 2023

- CITRIS: Causal Identifiability ICML 2022

- ENCO: Neural Causal Discovery ICLR 2022

& Safety

- Mechanistic Neural Networks ICML 2024

- Mechanistic Interpretability for AI Safety TMLR 2024

- Modulated Neural ODEs NeurIPS 2023

for Biomedical

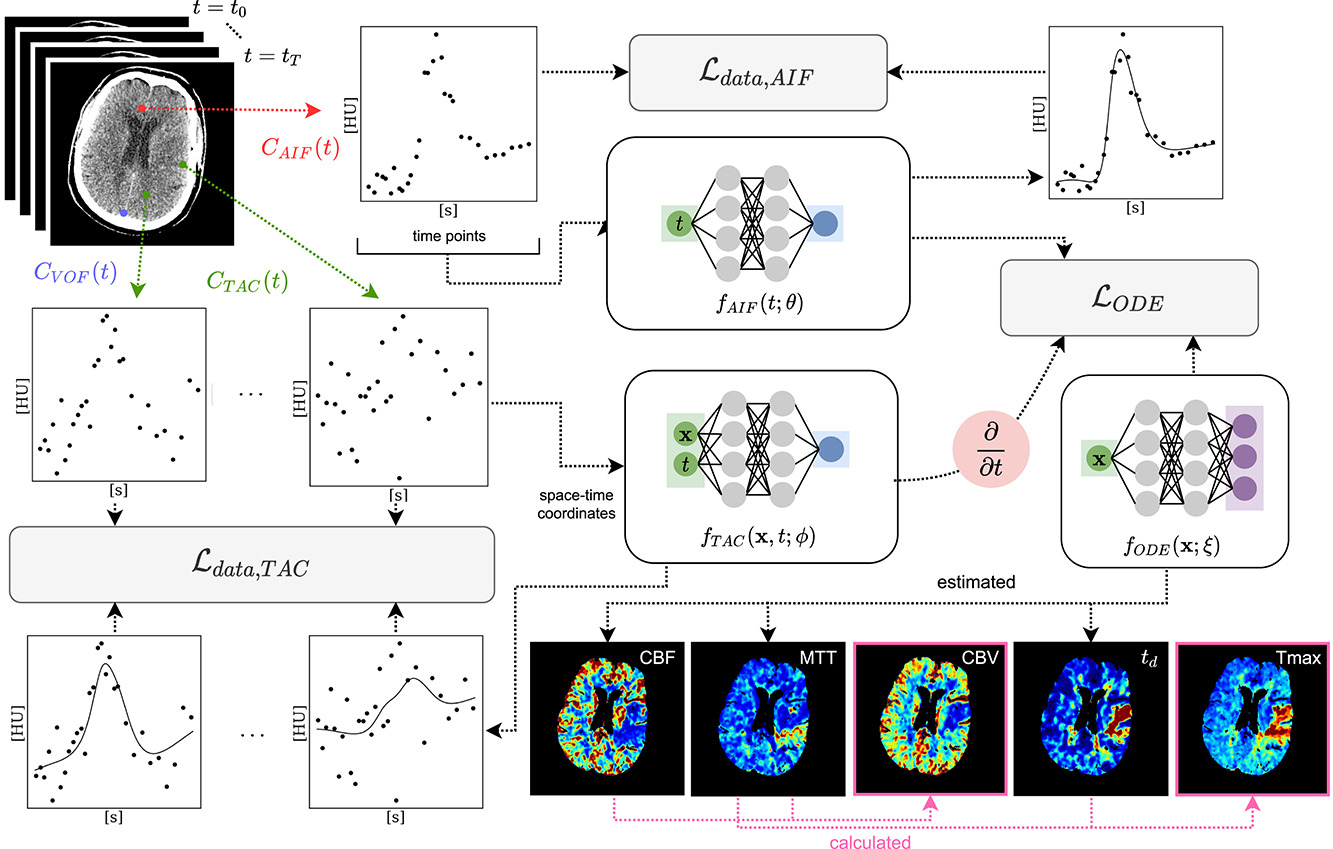

- Physics-Informed Neural Fields for CT Perfusion MIDL 2024 ★

- Spatio-temporal Physics-Informed MeDIA 2023

- VISA: Video Object Segmentation ECCV 2024